Fintech AI Review Volume #3

Hope and hype in equal measure, the state of GPT, and the shrinking gap between ideas and creative execution

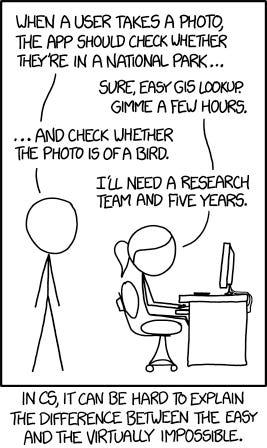

There’s a great xkcd cartoon about how in computer science, it’s sometimes hard to explain the difference between problems that are easy and those that are extremely difficult.¹ It feels like we’re very much at that stage with regard to the applications for AI in fintech.

On one hand, AI tools that are in production and widely accessible today are capable of truly impressive feats, not only automating routine tasks but also performing work previously considered to be solely the province of human creativity. On the other hand, generative AI is a new and rapidly-changing field, and there are plenty of things it just doesn’t do yet. Language models can make up information that has no basis in fact, and they can often be wrong in surprising ways.

This week’s newsletter dives into several examples of the opportunity for AI in financial services, but also the cost, uncertainty, and potential pitfalls. Nvidia’s CEO spoke about how Generative AI is already decreasing the distance between ideas and creative execution across a variety of fields. AI has the potential to expand access to financial services, and it has multiple straightforward potential applications in the insurance industry. The new wave of AI may even usher in a new era of human rights, as has occurred with previous technological revolutions.

There’s also concern about a lack of AI developer talent, whether smaller financial institutions can afford to keep up with a potential technological arms race, and how large language models will (among other things) create a lot of new and interesting ways to make mistakes in finance.

At a time when there is legitimately both hope and hype in equal measure, it’s valuable to gain a deeper understanding of the technology and how it works. Those exploring the use of AI in fintech will find it useful to understand the current capabilities of GPT models, and there’s a link to a video from Andrej Karpathy, who explains it better than anyone I’ve seen.

As always, please share your thoughts, ideas, comments, and any interesting content. Happy reading!

Latest News and Commentary

Who's going to work on all these AI projects at the big banks? - Business Insider

Executives of large financial institutions are understandably wide-eyed about potential use cases for generative AI. While there’s no shortage of compelling applications and projects being proposed, one of the biggest issues for banks seems to be competition for the talent to actually build them. Given the rapid pace of AI advancement, it’s no surprise that the relatively few engineering practitioners truly on the cutting edge have their choice of employment prospects, making both recruitment and retention challenging. It will be interesting to see how many of big banks’ proposed AI initiatives come to fruition, and which don’t due to lack of talent. In my experience, however, there are some incredibly talented engineers in the financial world, so perhaps banks would do well to up-skill their top talent on the latest AI technologies. I’d guess that prioritization, autonomy, and access to computing resources and data will be the actual determinants of AI project success for big banks, more so than lack of people.

'Everyone is a programmer' with generative A.I., says Nvidia chief - CNBC

Nvidia CEO Jensen Huang described generative AI as the most important computing platform of our generation. He spoke of the ability to use natural language prompts to accomplish what could previously be done only through hand-written code, as well as the ability to use multimodality to understand more than just text and numbers, and how this will unlock a huge new chapter in human creativity. Already, tools like ChatGPT and Midjourney are able to generate truly impressive work from fairly simple text prompts, and we’re really just getting started. Generative AI is collapsing the distance between idea and creation, as so many tools have done across human history (though now with an adoption curve many orders of magnitude steeper). “Everyone is a programmer” is one way to think about this collapsing distance, though I think the more concrete implication is that the nature of what it means to be a programmer will change. The combination of creative problem solving, logical reasoning, and ability to think at multiple levels of abstraction is still a valuable human skill. Perhaps generative AI, and LLMs specifically, make these traits even more productive on their own without requiring as much syntactic fluency. Some hope for the banks that can’t hire enough AI developers…

State of GPT - Andrej Karpathy @ Microsoft Build

Elite AI developer Andrej Karpathy, one of the founding engineers at OpenAI, gave a fantastic talk at Microsoft’s developer conference on the state of GPT. It’s 42 incredibly informative minutes of content, presented in a way that’s accessible even to non-experts. He discusses how LLMs are trained and the various phases of fine-tuning required to build a helpful ‘AI assistant’, including the vast amount of human labor involved. He explains helpful ways to consider how LLMs ‘think’ and how that compares to human thought, and he shares recommendations for how to most effectively use GPT models in practice. The talk is so educational, accessible, and well presented that it basically eludes summary. It’s very much worth your time to watch the whole thing.

National Bankers Association president Nicole Elam opined on the opportunities and risks of AI in banking at the recent Milken Institute Global Conference. She touted the potential of AI, and of digital banking more broadly, to increase access to capital and better meet the service expectations of consumers. She expressed concern, however, about the cost of adopting newer technologies, particularly for smaller institutions without large technology budgets, as well as potential compliance issues that can arise from newer and less-well-understood tools. Overall, automation has been shown to enable greater access to capital, and institutions adopting automated processes end up serving more diverse borrower populations. In fact, I’m a co-author on a paper that demonstrates this using data from the Paycheck Protection Program. The cost issue is certainly a concern, as the U.S. has a large and highly fragmented banking system, with thousands of banks and credit unions. While large banks typically have massive technology budgets and correspondingly good digital consumer banking experiences, many smaller banks do not. Given the cost and talent required to adopt AI for productive use, there’s a risk that AI adoption in financial services will be highly uneven, perhaps leading to more consolidation and making the biggest banks even bigger.

Generative AI is Coming for Insurance - A16Z Fintech

A16Z Fintech partner Joe Schmidt muses on the applicability of large language models in various insurance use cases. He outlines opportunities in underwriting/decisioning, as well as in sales and servicing, and discusses why LLMs may prove effective in areas where previous machine learning technologies have not. The examples make a lot of sense, and many are likely possible with today’s generally available AI technology, particularly in less complex areas of insurance like auto and home. It will be interesting to see how insurance incumbents adopt AI tech to improve parts of their existing businesses vs. how a new, vertically-specific insurance startup could build in an AI-first way from the beginning.

Technology Will Change The World - Will The World Change With It? - Forbes

Nik Milanovic, fintech aficionado and founder of This Week in Fintech, wrote a comprehensive piece in Forbes explaining the potential transformation from AI in the context of five prior technological platform shifts over the past couple hundred years. As Nik notes, every technological revolution has made some jobs obsolete, created others that nobody previously envisioned, and materially improved standards of living across the globe. Most interestingly, he considers the potential for utilizing the benefits from AI to create a national program for Universal Basic Income (UBI). UBI is controversial, and I’m by default skeptical of the concept for many reasons. However, Nik makes an interesting and compelling case that, “Major economic shifts throughout history such as the industrial revolution and mass electrification led to corresponding shifts in the basic rights and protections afforded to people in those societies as a result.” This is a thought-provoking read on one of the many policy questions that will undoubtedly arise as the applications of AI materialize.

Generative AI enhances alternative data lending opportunity - Fintech Nexus

Isabelle Castro Margaroli from Fintech Nexus interviewed Ocrolus (disclaimer: I work at Ocrolus) CEO Sam Bobley about the use of AI to power the company’s document automation platform, used by hundreds of lenders across multiple verticals, including small business and mortgage lending. Sam explains how Ocrolus has used multiple automation technologies since the company’s inception in 2014, including proprietary AI derived from its massive document dataset and ability to produce high-quality labeled training data in-house. It combines this technology with best-of-breed AI tech from Amazon, Google, and OpenAI to offer an end-to-end document automation and analysis platform that helps lenders to make high quality credit and fraud decisions with trusted data.

Money Stuff: AI Bots Are Coming to Finance - Bloomberg

In his typically witty style, Matt Levine comments on several examples of AI being used in finance, including in risk modeling, financial engineering, trading, and customer service. He references a statistic that at some large banks, 40% of listed job openings are for AI-related hires. He also details a few examples of questionable judgment in the use of AI. For instance, if a bank uses generative AI to suggest information that needs to be reported to regulators, can the bank accept those recommendations? Will regulators accept them? Also, LLMs are known to occasionally ‘hallucinate’ (i.e., confidently state incorrect, made-up information). Of course, humans often exhibit the same behavior! But if an AI system does this and then makes financial decisions based on it, it’s interesting to think of the implications. He somewhat hilariously considers all the interesting new ways of making mistakes in finance that will be introduced by AI: “New ways to be wrong!” These all provide good evidence for following one of the recommendations made by Andrej Karpathy in his GPT keynote: “Use them as a source of inspiration and suggestions, and think co-pilots, instead of completely autonomous agents…it’s just not clear that the models are there right now.”

¹ Interestingly, this xkcd is from 2014. Thanks to subsequent advances in image detection AI, the example given in the cartoon is now extremely trivial, but the meta-point is still valid.